Vector Databases: What They Are and How They Power AI

Vector databases come up in almost every AI conversation. In real systems, though, the best results usually come from a full retrieval stack, not from “a vector DB alone”.

This is a rewritten and updated version of the post (late 2025). It focuses less on code and more on:

- What vector search is (in simple terms)

- Which algorithms make it work at scale

- When a vector database is the right tool (and when it’s not)

- How to build production-ready RAG when your data lives across many silos

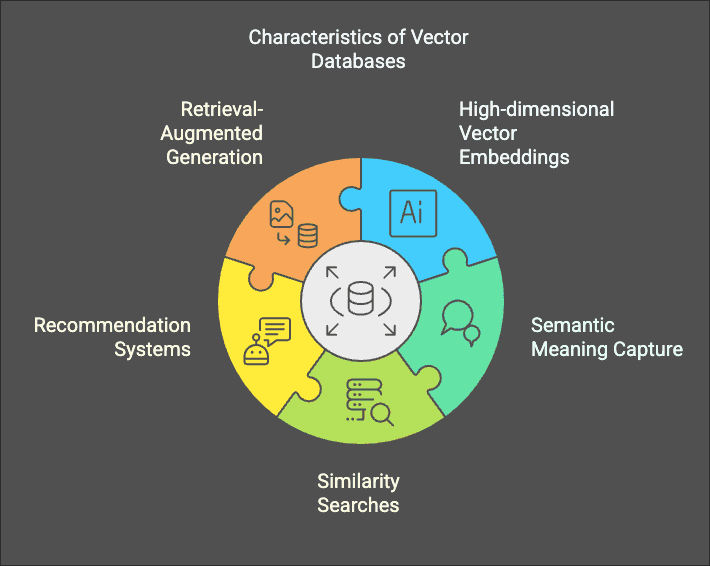

What a vector database really is

A vector database stores embeddings: lists of numbers that represent meaning. Similar items (for example, two paragraphs about “employee onboarding”) end up close together in vector space.

At query time, you embed the question, then run a nearest neighbor search to retrieve the most similar items.
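To make that concrete, here is a minimal sketch of the search step in plain Python. The three-dimensional vectors and document names are hand-made for illustration (a real embedding model produces hundreds of dimensions), and the search is exact brute force rather than what a production engine would do.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_neighbors(query_vec, items, k=2):
    """Exact search: score every stored vector, keep the k most similar."""
    scored = [(cosine_similarity(query_vec, vec), doc_id)
              for doc_id, vec in items.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical embeddings: two onboarding documents and one unrelated report.
ITEMS = {
    "onboarding-checklist": [0.9, 0.1, 0.0],
    "new-hire-welcome": [0.8, 0.2, 0.1],
    "quarterly-revenue": [0.0, 0.1, 0.9],
}

query = [0.9, 0.05, 0.0]  # stands in for the embedded user question
top = nearest_neighbors(query, ITEMS, k=2)
print(top)
```

The two onboarding documents come back first because their vectors point in nearly the same direction as the query; the revenue report points elsewhere.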

The shortest mental model

- Full-text search answers: “does the text contain the words I typed?”

- Vector search answers: “does the text mean something similar to what I typed?”

In production, you usually want both.

How RAG uses retrieval (the modern pipeline)

RAG isn’t “put docs in a vector DB”. It’s a chain with multiple quality levers.

flowchart LR
  classDef store fill:#0b1220,stroke:#334155,color:#e5e7eb;
  classDef step fill:#111827,stroke:#6366f1,color:#eef2ff;
  classDef good fill:#052e1a,stroke:#22c55e,color:#dcfce7;
  classDef warn fill:#2d1b0b,stroke:#f59e0b,color:#fffbeb;
  subgraph Ingest["Ingest (offline)"]
    D["Docs / DBs / tickets / PDFs"]:::store --> C["Chunk + clean"]:::step
    C --> M["Metadata\n(source, owner, ACL, timestamps)"]:::step
    C --> E["Embed"]:::step --> V["Vector index"]:::store
    C --> T["Full-text index (BM25)"]:::store
  end
  subgraph Query["Query (online)"]
    Q["User question"]:::step --> QE["Query rewrite\n(optional)"]:::step
    QE --> R1["Lexical retrieve\n(BM25)"]:::step
    QE --> R2["Vector retrieve\n(ANN)"]:::step
    R1 --> H["Hybrid merge"]:::step
    R2 --> H
    H --> RR["Re-rank\n(cross-encoder)"]:::warn
    RR --> CTX["Top context"]:::good --> LLM["LLM answer"]:::good
  end

Key point: a vector DB is one piece. Retrieval quality also depends on chunking, metadata, filters, hybrid retrieval, and reranking.
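The post doesn’t prescribe how the “hybrid merge” step works. One common choice is Reciprocal Rank Fusion (RRF), which only needs the rank positions from each retriever, not their raw scores. The sketch below assumes RRF with the widely used constant k=60; the document ids are made up.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of doc ids into one ranked list.

    Each doc scores sum(1 / (k + rank)) over the lists it appears in,
    where rank starts at 1. Docs found by both retrievers rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken by doc id so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from the two retrievers for the same question.
bm25_hits = ["err-4012-runbook", "onboarding-guide", "vpn-setup"]
vector_hits = ["onboarding-guide", "new-hire-faq", "err-4012-runbook"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)
```

Because RRF ignores raw scores, you don’t have to calibrate BM25 scores against cosine similarities. The fused list is what you would hand to the reranker.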

The algorithms behind vector search (the part people skip)

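Exact nearest neighbor search compares the query against every stored vector, so its cost grows linearly with the size of the collection and it becomes slow at scale. Most systems therefore use Approximate Nearest Neighbor (ANN) indexing, which trades a little recall for much lower latency.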

Here are the names you’ll see in production engines:

- HNSW (Hierarchical Navigable Small World): graph-based index, often great recall/latency, can be memory heavy.

- IVF (Inverted File Index): clusters vectors, searches within the most relevant clusters.

- PQ (Product Quantization): compresses vectors to save memory and improve speed (trade-off: accuracy).

- IVF+PQ: common combo in FAISS-style systems.

- DiskANN-style approaches: focus on large-scale search where the index lives on disk/SSD.

- ScaNN-style approaches: quantization-based ANN (Google’s ScaNN library uses anisotropic vector quantization) that targets high throughput at high recall, especially for inner-product search.
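To show where the “approximate” in ANN comes from, here is a toy version of the IVF idea from the list above. It is a sketch only: the centroids are hand-picked instead of trained with k-means, the vectors are two-dimensional, and the class name `ToyIVF` and the parameter name `nprobe` (borrowed from FAISS terminology) are illustrative.

```python
def squared_l2(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


class ToyIVF:
    """Toy inverted-file index: vectors are bucketed by nearest centroid."""

    def __init__(self, centroids):
        self.centroids = centroids
        self.lists = {i: [] for i in range(len(centroids))}

    def _nearest_centroids(self, vec, n):
        order = sorted(range(len(self.centroids)),
                       key=lambda i: squared_l2(vec, self.centroids[i]))
        return order[:n]

    def add(self, doc_id, vec):
        cluster = self._nearest_centroids(vec, 1)[0]
        self.lists[cluster].append((doc_id, vec))

    def search(self, query, k=1, nprobe=1):
        # Only scan the nprobe clusters whose centroids are closest to the query.
        candidates = []
        for cluster in self._nearest_centroids(query, nprobe):
            candidates.extend(self.lists[cluster])
        candidates.sort(key=lambda item: squared_l2(query, item[1]))
        return [doc_id for doc_id, _ in candidates[:k]]


index = ToyIVF(centroids=[[0.0, 0.0], [10.0, 0.0]])
for doc_id, vec in {"a": [1.0, 0.0], "b": [3.0, 0.0],
                    "c": [5.1, 0.0], "d": [9.0, 0.0]}.items():
    index.add(doc_id, vec)

query = [4.9, 0.0]
fast = index.search(query, k=1, nprobe=1)      # scans one cluster
thorough = index.search(query, k=1, nprobe=2)  # scans both clusters
print(fast, thorough)
```

With `nprobe=1` the search only looks in the query’s own cluster and returns “b”, missing the true nearest neighbor “c”, which sits just across the cluster boundary. Raising `nprobe` recovers it at the cost of scanning more vectors. That recall/latency dial is the core trade-off in every index on the list.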

Distance metrics you’ll see:

- Cosine similarity (often computed as a dot product on unit-normalized vectors)

- Dot product

- Euclidean (L2)
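These three metrics are closely related. A short sketch (plain Python, toy vectors) shows that once vectors are normalized to unit length, the dot product equals cosine similarity, and squared L2 distance is just `2 - 2 * cosine`, so all three produce the same ranking.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def l2_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def cosine_similarity(a, b):
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


a = [3.0, 4.0]
b = [4.0, 3.0]

cos_ab = cosine_similarity(a, b)          # 24 / 25
ua, ub = normalize(a), normalize(b)
dot_unit = dot(ua, ub)                    # same value as cos_ab
l2_sq_unit = l2_distance(ua, ub) ** 2     # equals 2 - 2 * cos_ab

print(cos_ab, dot_unit, l2_sq_unit)
```

On unnormalized vectors the metrics can disagree, because dot product rewards long vectors and L2 penalizes them. Which one to use depends on how the embedding model was trained, so check the model’s documentation.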

Should you always use a vector database?

No. A vector database is great when:

- Your users ask in natural language and your content is unstructured

- Keyword search fails because synonyms and phrasing vary

- You need semantic matching across many documents

But it is not always the best option when:

- The question is structured (“sum revenue by month”) → use SQL/semantic models

- The content is small enough to search with full-text + reranking

- You need “global answers” that require connecting entities across a corpus → consider knowledge graphs / GraphRAG-style approaches

Here’s a simple chooser:

flowchart TB
  classDef q fill:#111827,stroke:#6366f1,color:#eef2ff;
  classDef a fill:#052e1a,stroke:#22c55e,color:#dcfce7;
  classDef n fill:#0b1220,stroke:#334155,color:#e5e7eb;
  Q["What kind of question is this?"]:::q
  Q -->|Mostly numbers, filters, joins| SQL["SQL / BI semantic layer"]:::a
  Q -->|Find specific phrases / compliance clauses| FT["Full-text search (BM25)"]:::a
  Q -->|Natural language, fuzzy matching| VS["Vector search (ANN)"]:::a
  Q -->|Needs connections across many docs| KG["Knowledge graph / GraphRAG"]:::a
  VS --> HY["Often best: Hybrid (BM25 + vectors) + reranker"]:::a
  FT --> HY
  KG --> HY

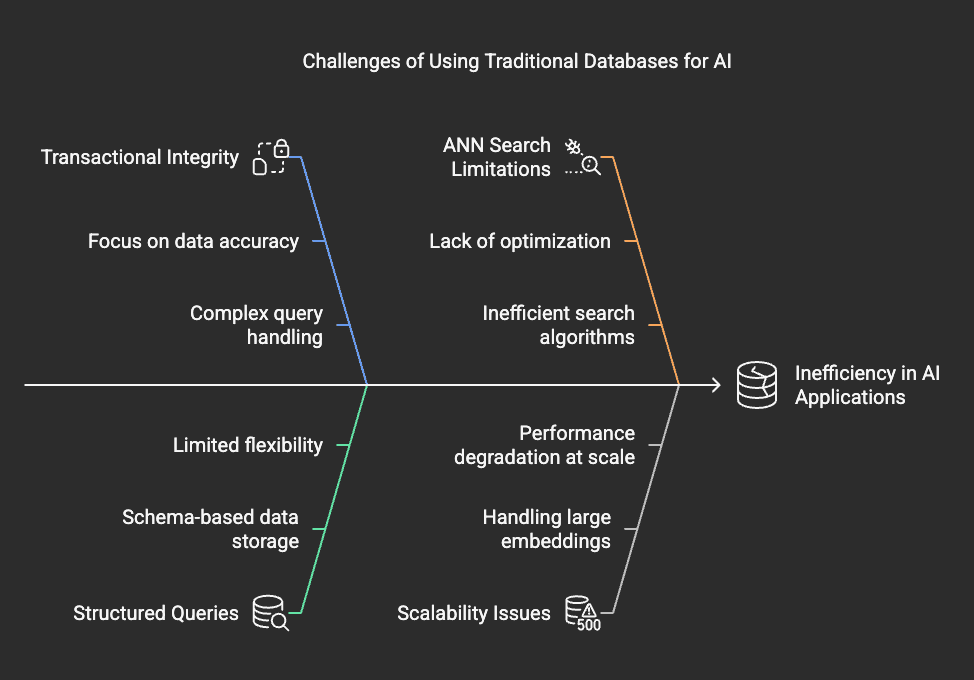

“Why not just use PostgreSQL or MongoDB?”

You can. And sometimes you should.

The practical question is not “can it store vectors?”, it’s:

- How fast can it search at your scale?

- Can it filter by metadata/ACL efficiently?

- Can it handle updates without breaking latency?

- Can you operate it reliably (backup, monitoring, cost)?

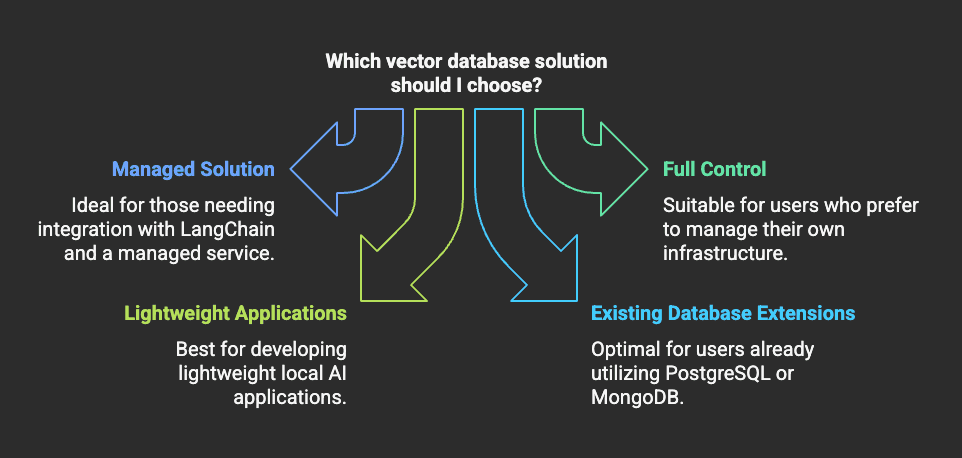

PostgreSQL (pgvector) — updated (and better than it used to be)

pgvector has improved a lot recently:

- v0.7.0 added halfvec, sparsevec, binary vectors, quantization options, and more distance functions (useful for memory and certain workloads). (pgvector 0.7.0 release)

- v0.8.0 improved filtered search and added iterative index scans to avoid “overfiltering” (where a filter applied after the index scan leaves too few results and hurts recall), plus better HNSW build/search performance. (pgvector 0.8.0 release)

Practical guidance (2025):

- For small projects and early production (tens/hundreds of thousands of vectors, modest QPS), pgvector can be a very good “single database” option.

- If your workload becomes “search-first” (millions of vectors, high QPS, complex filters), you’ll often outgrow a single Postgres node and move to a dedicated search engine/vector DB.
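For a sense of what the “single database” option looks like, here is a sketch of the SQL involved, held in Python strings so the parameters can be built and inspected without a running database. The table and column names (`chunks`, `tenant_id`, `body`, `embedding`) and the 384 dimensions are assumptions for illustration. The `vector` type, the `hnsw` index with `vector_cosine_ops`, the `<=>` cosine-distance operator, and the `hnsw.iterative_scan` setting come from pgvector’s documentation. Running it requires Postgres with pgvector 0.8.0+ and a driver such as psycopg, which is not shown.

```python
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS chunks (
    id bigserial PRIMARY KEY,
    tenant_id text NOT NULL,
    body text NOT NULL,
    embedding vector(384)
);
CREATE INDEX IF NOT EXISTS chunks_embedding_hnsw
    ON chunks USING hnsw (embedding vector_cosine_ops);
"""

# pgvector 0.8.0+: keep scanning the index until enough rows pass the filter.
ITERATIVE_SCAN_SQL = "SET hnsw.iterative_scan = strict_order;"

# %s placeholders follow the psycopg parameter style.
SEARCH_SQL = """
SELECT id, body, embedding <=> %s::vector AS cosine_distance
FROM chunks
WHERE tenant_id = %s
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def to_vector_literal(values):
    """Format a Python sequence as pgvector's text form, e.g. '[0.1,0.2]'."""
    return "[" + ",".join(str(float(v)) for v in values) + "]"


def search_params(query_embedding, tenant_id, limit=5):
    """Parameters for SEARCH_SQL, in placeholder order."""
    literal = to_vector_literal(query_embedding)
    return (literal, tenant_id, literal, limit)


params = search_params([0.1, 0.2, 1], tenant_id="acme", limit=3)
print(params)
```

The metadata filter (`tenant_id`) lives in the same query as the vector ordering, which is the main appeal of keeping vectors next to your relational data.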

MongoDB Atlas Vector Search

A good fit when your data already lives in MongoDB and you want to keep one operational system. The same rules still apply: measure recall, latency, and filtering behavior on your own dataset.
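As a rough sketch, an Atlas Vector Search query is an aggregation pipeline whose first stage is `$vectorSearch`. The helper below only builds that pipeline as plain Python data. The index name, field names, and tenant filter are hypothetical, and the filter assumes `tenant_id` was declared as a filter field in the index definition.

```python
def build_vector_search_pipeline(query_vector, tenant_id, limit=5, num_candidates=100):
    """Build an aggregation pipeline for an Atlas Vector Search query."""
    if num_candidates < limit:
        raise ValueError("num_candidates must be at least limit")
    return [
        {
            "$vectorSearch": {
                "index": "chunks_vector_index",  # hypothetical index name
                "path": "embedding",             # hypothetical vector field
                "queryVector": list(query_vector),
                "numCandidates": num_candidates,  # ANN candidates considered
                "limit": limit,                   # results returned
                "filter": {"tenant_id": {"$eq": tenant_id}},
            }
        },
        {
            "$project": {
                "_id": 1,
                "body": 1,
                "score": {"$meta": "vectorSearchScore"},
            }
        },
    ]


pipeline = build_vector_search_pipeline([0.1, 0.2, 0.3], tenant_id="acme", limit=3)
print(pipeline[0]["$vectorSearch"]["limit"])
```

With pymongo you would pass this list to `collection.aggregate(pipeline)`. Raising `numCandidates` generally improves recall at the cost of latency, which is exactly the kind of thing to measure on your own data.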

Production reality: most teams have data silos

In production, your “knowledge” is spread across:

- Docs (Notion/Confluence/Google Drive)

- Tickets (Jira/ServiceNow)

- Source code (GitHub)

- Databases (Postgres, Snowflake, etc.)

- PDFs, emails, chat logs

Your retrieval system has to unify this without creating a security nightmare.

flowchart LR
  classDef sys fill:#0b1220,stroke:#334155,color:#e5e7eb;
  classDef step fill:#111827,stroke:#6366f1,color:#eef2ff;
  classDef guard fill:#2d1b0b,stroke:#f59e0b,color:#fffbeb;
  classDef out fill:#052e1a,stroke:#22c55e,color:#dcfce7;
  subgraph Sources["Data silos"]
    S1["Docs"]:::sys
    S2["Tickets"]:::sys
    S3["DBs"]:::sys
    S4["Code"]:::sys
  end
  subgraph Pipeline["Ingestion + governance"]
    Conn["Connectors\n(incremental sync)"]:::step
    ACL["ACL + tenancy mapping"]:::guard
    Meta["Metadata + lineage"]:::step
    Redact["PII redaction\n(optional)"]:::guard
  end
  subgraph Retrieval["Retrieval layer"]
    FT["Full-text index (BM25)"]:::sys
    VX["Vector index (ANN)"]:::sys
    Rerank["Reranker"]:::step
  end
  subgraph App["AI app"]
    Policy["AuthZ check\nat query time"]:::guard
    LLM["Answer + citations"]:::out
  end
  Sources --> Conn --> ACL --> Meta
  Meta --> FT
  Meta --> VX
  FT --> Rerank --> Policy --> LLM
  VX --> Rerank
  Redact --> Meta
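The “AuthZ check at query time” step in the diagram could look something like the sketch below. The `Chunk` fields and the group-based permission model are assumptions for illustration; your sources may expose ACLs as users, roles, or something else entirely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    tenant: str
    allowed_groups: frozenset  # groups copied from the source system at ingest
    text: str


def authorize(candidates, user_tenant, user_groups):
    """Keep only chunks this user may read. Run on every query, after retrieval."""
    groups = set(user_groups)
    return [
        chunk for chunk in candidates
        if chunk.tenant == user_tenant and groups & chunk.allowed_groups
    ]


# Hypothetical retrieval results spanning two tenants.
candidates = [
    Chunk("hr-policy", "acme", frozenset({"hr"}), "Leave policy ..."),
    Chunk("eng-runbook", "acme", frozenset({"eng", "sre"}), "Restart steps ..."),
    Chunk("other-tenant-doc", "globex", frozenset({"eng"}), "Internal notes ..."),
]

visible = authorize(candidates, user_tenant="acme", user_groups=["eng"])
print([chunk.doc_id for chunk in visible])
```

Filtering after retrieval is the simplest form of this check, but it can leave you with too few results. Where the engine supports it, pushing the same tenant and group conditions into the search filter avoids that, with this post-check kept as a safety net.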

Best practices for multi-silo RAG

- Treat access control as data: store document-level permissions and enforce them at query time (not only at ingest time).

- Use hybrid retrieval (BM25 + vectors): it improves recall for exact terms (IDs, error codes) and still handles meaning.

- Rerank your top candidates: it’s often the single biggest quality lift.

- Track freshness: incremental sync, timestamps, and clear “last updated” behavior.

- Log retrieval (not just answers): you need to know which docs were retrieved, filtered out, and used.

- Measure: recall@k, nDCG, answer groundedness, latency, and cost.

- Avoid one giant index if you have tenants/domains with different rules; shard by tenant or by domain when it helps.
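Two of the metrics in the list above, recall@k and nDCG, are easy to compute once you have a small set of labeled queries. This sketch uses the standard definitions (log2 rank discount, graded relevance used directly as gain); the document ids and relevance grades are made up.

```python
import math


def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant docs that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / len(relevant)


def dcg_at_k(retrieved, gains, k):
    """Discounted cumulative gain: relevance discounted by log2(rank + 1)."""
    return sum(
        gains.get(doc_id, 0.0) / math.log2(rank + 1)
        for rank, doc_id in enumerate(retrieved[:k], start=1)
    )


def ndcg_at_k(retrieved, gains, k):
    """DCG divided by the DCG of the ideal ordering (1.0 is a perfect ranking)."""
    ideal = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(rank + 1) for rank, g in enumerate(ideal, start=1))
    return dcg_at_k(retrieved, gains, k) / idcg if idcg > 0 else 0.0


# Hypothetical labels for one query: higher grade means more relevant.
gains = {"d1": 3.0, "d2": 2.0, "d3": 1.0}
retrieved = ["d1", "d4", "d2", "d9"]

recall = recall_at_k(retrieved, set(gains), k=3)
ndcg = ndcg_at_k(retrieved, gains, k=3)
print(round(recall, 3), round(ndcg, 3))
```

Recall@k tells you whether the right documents made it into the candidate set at all. nDCG also rewards putting the best ones first, which makes it the more useful number when you are tuning a reranker.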

Popular options (short and practical)

Instead of a long list, here’s the easy breakdown:

- Managed vector DBs (convenient ops): Pinecone, etc.

- Open-source vector engines (self-host): Milvus, Weaviate, Qdrant, etc.

- Search engines with vectors (great hybrid story): Elasticsearch/OpenSearch-style approaches

- “One stack” databases for smaller projects: PostgreSQL + pgvector, MongoDB Atlas Vector Search

- Libraries: FAISS (you embed it inside your service)

Keep exploring

- Hybrid retrieval (text + vectors): How vector databases power AI search

- MongoDB Atlas Vector Search Quick Start: Atlas Vector Search tutorial

- pgvector release notes: v0.7.0, v0.8.0

- A curated list of engines/tools: Awesome Vector Databases
