What AI Models to Use? Choosing the Right AI Model for Your Needs

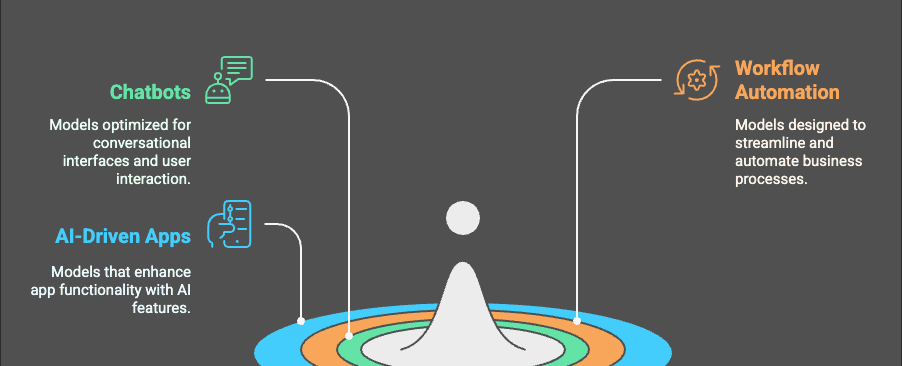

Whether you’re building chatbots, automating workflows, or developing AI-driven apps, the right AI model can make a huge difference in performance, security, and cost.

This post is a practical guide. It keeps the language simple, but it doesn’t dodge the real trade-offs.

If you want one “north star”: pick the model that makes your system reliable and affordable, not the model that wins a single benchmark.

If you’re new to evaluation, start here: OpenAI evals getting started.

A quick rule of thumb (what to optimize for)

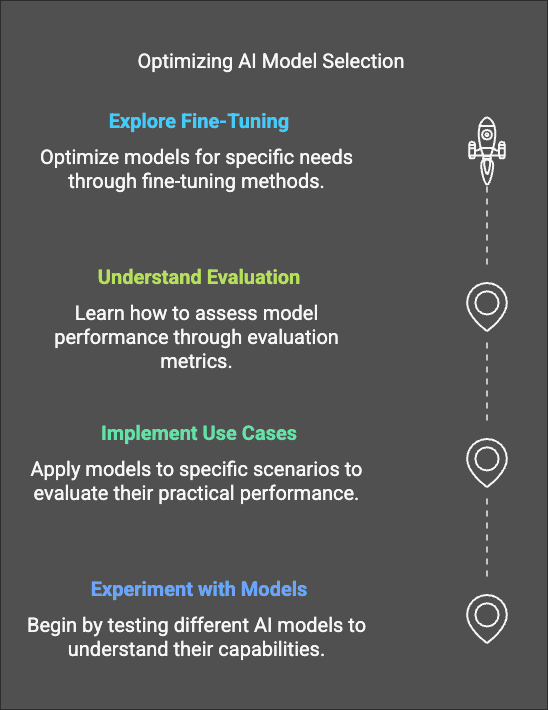

Most teams should choose models by answering these questions in order:

- What is the job? (chat, extraction, classification, code, vision, speech)

- What matters most? (latency, cost, reasoning quality, safety, multilingual, tool use)

- Where will it run? (cloud API, your VPC, on-device)

- What data can it see? (PII, IP, regulated data)

- How will you measure success? (eval set + online metrics)
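
To make the last question concrete, here is a minimal sketch of an offline eval in Python. Everything in it is an assumption for illustration: the three-example `EVAL_SET`, the labels, and `keyword_model`, which stands in for a real model call and is not any vendor's API.

```python
from typing import Callable

# Hypothetical eval set: (prompt, expected label) pairs. In practice you
# would collect these from your own product; these three are made up.
EVAL_SET = [
    ("Refund my order #123", "billing"),
    ("The app crashes on login", "bug"),
    ("How do I export my data?", "how_to"),
]


def keyword_model(prompt: str) -> str:
    """Stand-in for a real model call (an assumption, not a vendor API)."""
    text = prompt.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    if "crash" in text or "error" in text:
        return "bug"
    return "how_to"


def accuracy(model: Callable[[str], str], eval_set: list[tuple[str, str]]) -> float:
    """Fraction of eval examples where the model output matches the label."""
    hits = sum(1 for prompt, expected in eval_set if model(prompt) == expected)
    return hits / len(eval_set)


score = accuracy(keyword_model, EVAL_SET)
print(f"offline accuracy: {score:.2f}")
```

Because `accuracy` accepts any prompt-to-text function, you can run the same eval set against each candidate model and compare scores before looking at online metrics.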

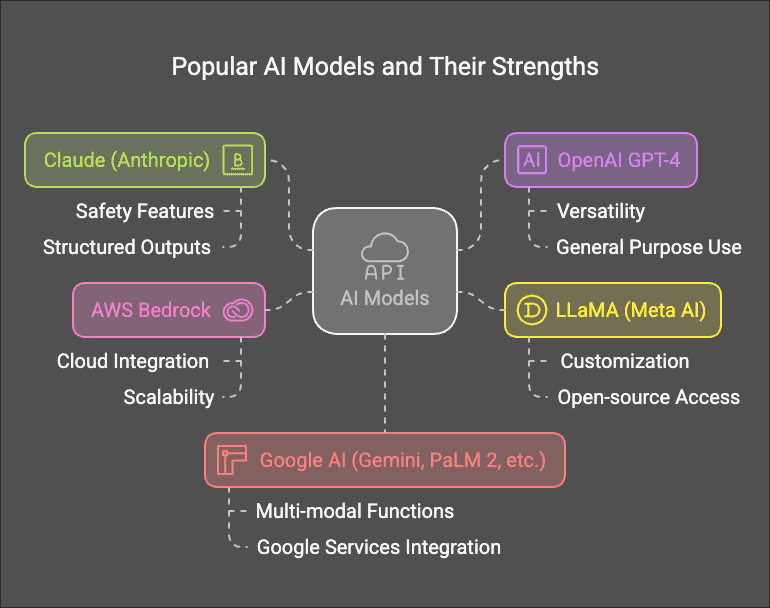

Comparing model families (a practical table)

This table is intentionally “high level”. Providers change names and pricing often, but the decision logic stays stable.

| Family | Best for | Strengths | Trade-offs | Typical deployment |

|---|---|---|---|---|

| OpenAI (GPT family) | General assistant, coding, tool calling, multimodal | Strong all-around; mature tooling | Closed; governance depends on vendor settings | Hosted API (OpenAI / Azure OpenAI) |

| Anthropic (Claude family) | Long docs, analysis, safer assistants | Strong writing + reasoning; enterprise-friendly patterns | Closed; deployment choices depend on org constraints | Hosted API / cloud marketplaces |

| Google (Gemini family) | Multimodal + Google ecosystem | Strong multimodal; tight Google integrations | Closed; ecosystem choice matters | Hosted API (Google) |

| Meta Llama (open weights) | Private deployments, customization | Run in your infra; huge community; many fine-tunes | You own ops, safety, updates | Self-host (GPU/CPU), managed hosts |

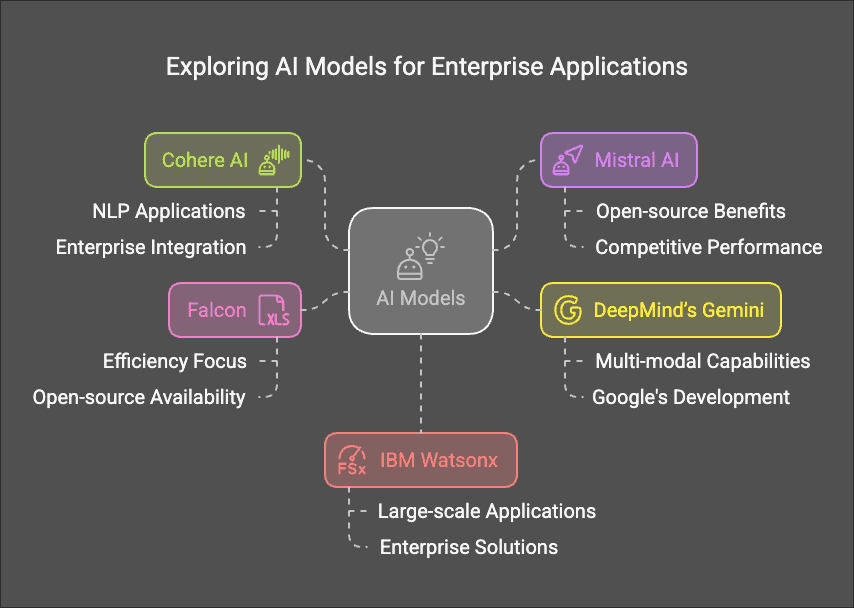

| Mistral (open + hosted) | Cost-sensitive apps, flexible deployments | Strong performance per cost; flexible options | You own some integration choices | Hosted API or self/managed hosting |

If you want a directory of many models: MetaSchool’s AI Models Directory.

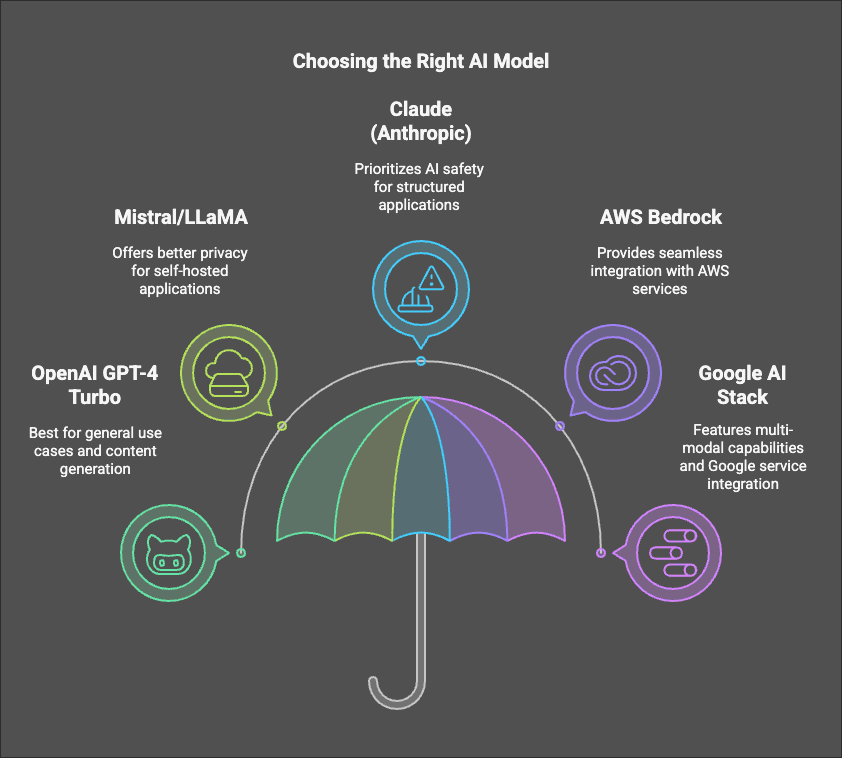

Which one should you use? (simple decision flow)

Here’s the simple breakdown:

- If you’re shipping a product next week: start with a hosted frontier model (fastest path).

- If you’re dealing with sensitive data / strict rules: use a managed enterprise setup or open models in your own infra.

- If cost/latency is the bottleneck: use smaller models, routing, caching, and distillation.
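
Of the cost levers in the last bullet, caching is usually the easiest to try first. The sketch below is one simple way to do it, assuming an exact-match cache keyed on a normalized prompt. `CachedModel` and `fake_model` are hypothetical names, and `fake_model` stands in for a paid API call.

```python
import hashlib
from typing import Callable


class CachedModel:
    """Wraps any prompt -> text function with an exact-match response cache."""

    def __init__(self, call_model: Callable[[str], str]) -> None:
        self._call_model = call_model
        self._cache: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize whitespace and case so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def __call__(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        answer = self._call_model(prompt)
        self._cache[key] = answer
        return answer


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a paid API call."""
    return f"answer to: {prompt.strip()}"


model = CachedModel(fake_model)
model("What is your refund policy?")
model("what is your   refund policy?")  # same key after normalization
print(model.hits, model.misses)
```

Lowercasing the key is only safe when case does not change the meaning of a prompt. For code or case-sensitive data you would likely drop that step.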

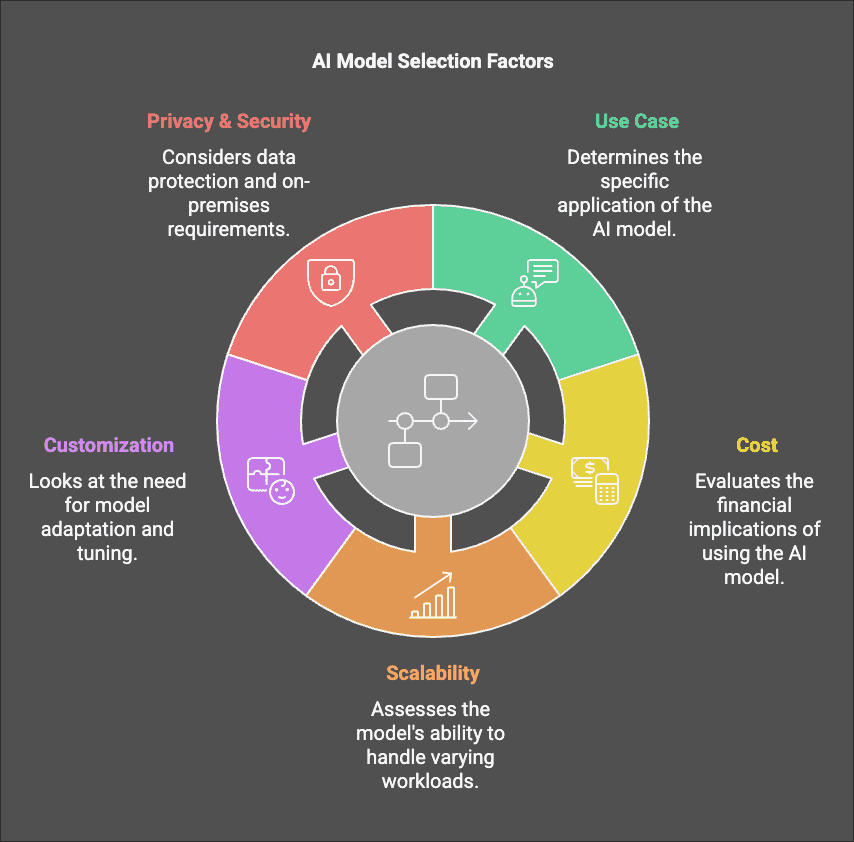

Considerations When Picking an AI Model

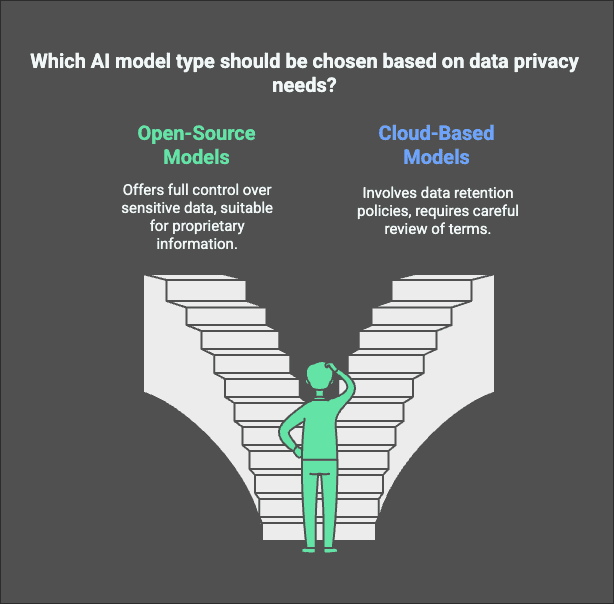

Data Privacy

When choosing an AI model, treat data privacy as a key factor. If you’re working with sensitive or proprietary data, you might prefer an open-weight, self-hosted model such as Llama or Mistral, which keeps prompts and outputs inside infrastructure you control.

Hosted offerings such as OpenAI’s GPT-4 API or Amazon Bedrock handle data differently, often with their own retention policies or logging mechanisms, so review their documentation and terms before implementation.

Foundation models, fine-tuning, and where “distilled models” fit

Foundation models (what they are)

A foundation model is trained on huge datasets to learn general language/vision patterns. It’s not trained for your specific company task yet—it’s a general engine.

Most LLMs start with self-supervised learning:

- predict the next token

- minimize cross-entropy loss

- optimize with gradient descent variants (often Adam/AdamW)
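
The first two bullets fit in a few lines of plain Python. This is a toy sketch: the three-word vocabulary and the logits are made up, and a real model computes this loss over every position in a batch rather than a single one.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_loss(logits: list[float], target_id: int) -> float:
    """Cross-entropy for one position: -log p(correct next token)."""
    return -math.log(softmax(logits)[target_id])


# Toy vocabulary and made-up logits for the context "the cat sat on the".
vocab = ["mat", "dog", "moon"]
logits = [2.0, 0.5, -1.0]

loss_if_mat = next_token_loss(logits, vocab.index("mat"))
loss_if_moon = next_token_loss(logits, vocab.index("moon"))
print(f"{loss_if_mat:.3f} {loss_if_moon:.3f}")
```

The loss is small when the model already puts high probability on the true next token and large when it does not. Training nudges the weights to shrink that number across the whole dataset.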

Distilled models (what they are and why they matter)

Knowledge distillation is “teacher → student” training:

- a large model (teacher) generates targets (answers, probabilities, reasoning traces)

- a smaller model (student) learns to imitate those targets
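
When the teacher's targets are probabilities, "imitate" is often measured as a KL divergence between temperature-softened distributions. The sketch below assumes that setup. The logits are invented, and real recipes usually mix this term with the ordinary cross-entropy loss and sometimes scale it by the squared temperature.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Softmax with temperature; higher temperature gives a flatter distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: list[float],
    student_logits: list[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


# Made-up logits over a three-token vocabulary.
teacher = [3.0, 1.0, 0.2]
close_student = [2.8, 1.1, 0.3]
far_student = [0.2, 1.0, 3.0]

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

A student whose distribution tracks the teacher's gets a loss near zero, and one that ranks the tokens in the opposite order gets a much larger one.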

Why teams like distilled models:

- lower latency

- lower cost

- easier to run in private infrastructure

A canonical example is DistilBERT (a distilled version of BERT): https://huggingface.co/docs/transformers/model_doc/distilbert

Fine-tuning (how models become “yours”)

There are two common paths:

- Full fine-tuning: update all weights (expensive, heavy ops).

- Parameter-efficient fine-tuning (PEFT): train a small set of added parameters (e.g., LoRA adapters) and keep the base model weights frozen. Docs: https://huggingface.co/docs/peft/index
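
To see why the second path is cheaper, here is a rough sketch of the LoRA idea in plain Python: the base weight matrix `W` stays fixed and only two thin matrices `A` and `B` are trained. The function names and the 4096 x 4096 layer size are illustrative assumptions, and this is not the PEFT library's API.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full fine-tuning params, LoRA trainable params) for one weight matrix."""
    full = d_out * d_in
    lora = rank * (d_in + d_out)  # A is rank x d_in, B is d_out x rank
    return full, lora


def matvec(matrix: list[list[float]], vector: list[float]) -> list[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(
    W: list[list[float]],
    A: list[list[float]],
    B: list[list[float]],
    x: list[float],
    alpha: float,
    rank: int,
) -> list[float]:
    """y = W x + (alpha / rank) * B (A x); only A and B would be trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
print(full, lora, f"{lora / full:.2%}")
```

For that single hypothetical layer, full fine-tuning updates 16,777,216 weights while rank-8 LoRA trains 65,536, about 0.39% as many. When `B` starts at zero, `lora_forward` returns exactly the base model's output, so training begins from the original behavior.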

In practice, many teams combine:

- a general foundation model

- a small, task-tuned model for routine jobs

- a routing layer to decide which model runs each request
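
A routing layer can start as a plain function. The sketch below assumes two hypothetical model names and a simple rule (task type plus input length). Production routers often add confidence scores or a small classifier, which is out of scope here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical model names; swap in whatever you actually deploy.
SMALL_MODEL = "small-task-tuned"
LARGE_MODEL = "general-foundation"

# Tasks assumed to be routine enough for the small model.
ROUTINE_TASKS = {"classification", "extraction"}


def route(task: str, prompt: str, max_small_chars: int = 2000) -> Route:
    """Send routine, short requests to the small model and everything else to the large one."""
    if task in ROUTINE_TASKS and len(prompt) <= max_small_chars:
        return Route(SMALL_MODEL, "routine task within the small model's input budget")
    return Route(LARGE_MODEL, "open-ended task or long input")


print(route("classification", "Is this ticket about billing?"))
print(route("chat", "Help me plan a migration to a new database."))
```

Returning a reason alongside the model name makes routing decisions easy to log and audit later.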

How Generative AI / LLMs relate to “regular” machine learning

LLMs are still ML. They just operate at a huge scale and use a particular architecture.

Think of it like this:

- ML is the field (supervised, unsupervised, RL, probabilistic models, etc.)

- Deep learning is a subset of ML (neural networks trained with backprop)

- LLMs are a subset of deep learning (large transformer-based models trained mostly with self-supervised learning)

LLMs show up in ML systems the same way other models do:

- data → training → evaluation → deployment → monitoring → iteration

The core ideas and algorithms behind modern LLMs (high level)

If you want a mental model without a math wall:

- Transformer: the architecture (attention + feed-forward layers).

- Attention: “which tokens matter most right now?”

- Tokenization (BPE-like): how text becomes token IDs.

- Training objective: next-token prediction (most of the time).

- Decoding algorithms: greedy, beam search, sampling with temperature/top-p.

- Alignment: extra training steps to better match human preferences (often SFT + preference optimization like RLHF/DPO-style approaches).
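
The decoding bullet is the one you touch most often as a model user, since temperature and top-p are usually exposed as API parameters. Here is a small sketch of greedy decoding versus temperature plus top-p (nucleus) sampling for a single step. The logits are made up, and real implementations work on tensors over a full vocabulary.

```python
import math
import random
from typing import Optional


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Softmax with temperature; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(logits: list[float]) -> int:
    """Always pick the highest-scoring token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_top_p(
    logits: list[float],
    temperature: float = 1.0,
    top_p: float = 0.9,
    rng: Optional[random.Random] = None,
) -> int:
    """Sample from the smallest set of top tokens whose probability mass reaches top_p."""
    if rng is None:
        rng = random.Random()
    probs = softmax(logits, temperature)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    nucleus: list[int] = []
    cumulative = 0.0
    for token_id in ranked:
        nucleus.append(token_id)
        cumulative += probs[token_id]
        if cumulative >= top_p:
            break
    # random.choices renormalizes the weights within the nucleus.
    weights = [probs[token_id] for token_id in nucleus]
    return rng.choices(nucleus, weights=weights, k=1)[0]


logits = [1.0, 3.0, 2.0]  # made-up scores for a three-token vocabulary
rng = random.Random(0)
print(greedy(logits))
print([sample_top_p(logits, temperature=1.0, top_p=0.8, rng=rng) for _ in range(10)])
```

With these logits and `top_p=0.8`, the least likely token falls outside the nucleus and is never sampled. Greedy decoding always returns the single top token.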

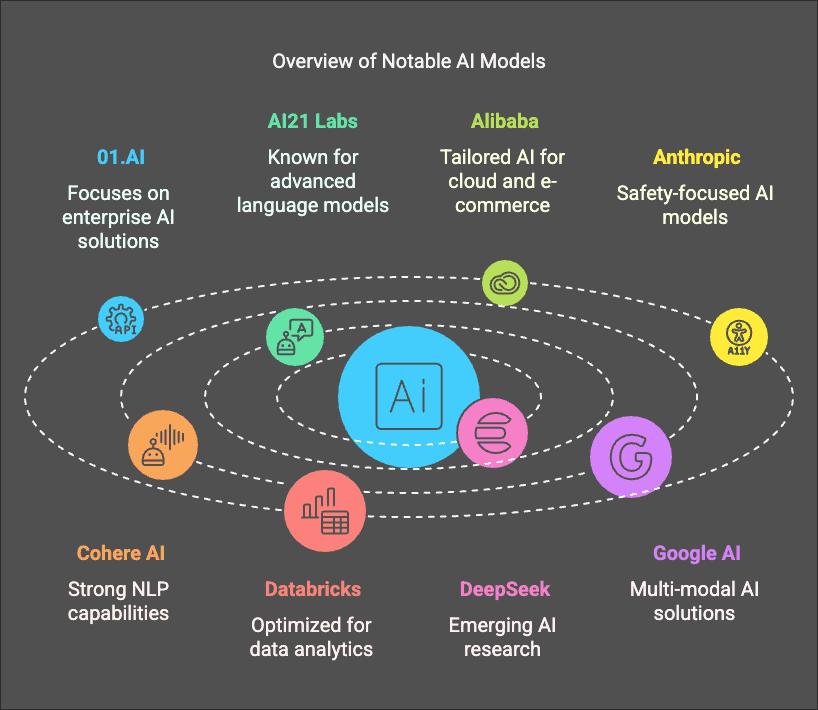

Other AI Models to Explore

This is not a complete list, as the AI landscape is constantly evolving. Here are some additional AI models worth considering:

Learning resources (courses + videos)

I’m linking instead of embedding to avoid YouTube embed errors.

Keep exploring (official docs + hands-on)

- OpenAI models docs: https://platform.openai.com/docs/models

- Anthropic Claude docs: https://docs.anthropic.com/

- Google Gemini API docs: https://ai.google.dev/

- Meta Llama: https://ai.meta.com/llama/

- Mistral docs: https://docs.mistral.ai/

- Hugging Face Transformers: https://huggingface.co/docs/transformers/index

- Hugging Face PEFT (LoRA, etc.): https://huggingface.co/docs/peft/index

Choosing the right model is an iterative process. Start simple, measure, then add sophistication: routing, caching, distillation, and fine-tuning only when you can prove the value.
